Beyond the Lab: Why Motion Capture Needs a New Architecture

FPGA-synchronized wearable sensing in an effortless UX delivers lab-grade kinematics in something you'd actually want to wear


The gold standard for understanding how humans move costs a quarter million dollars, lives in a windowless lab, and requires an expert to glue reflective balls to your body. It produces extraordinary data. Almost nobody can use it.

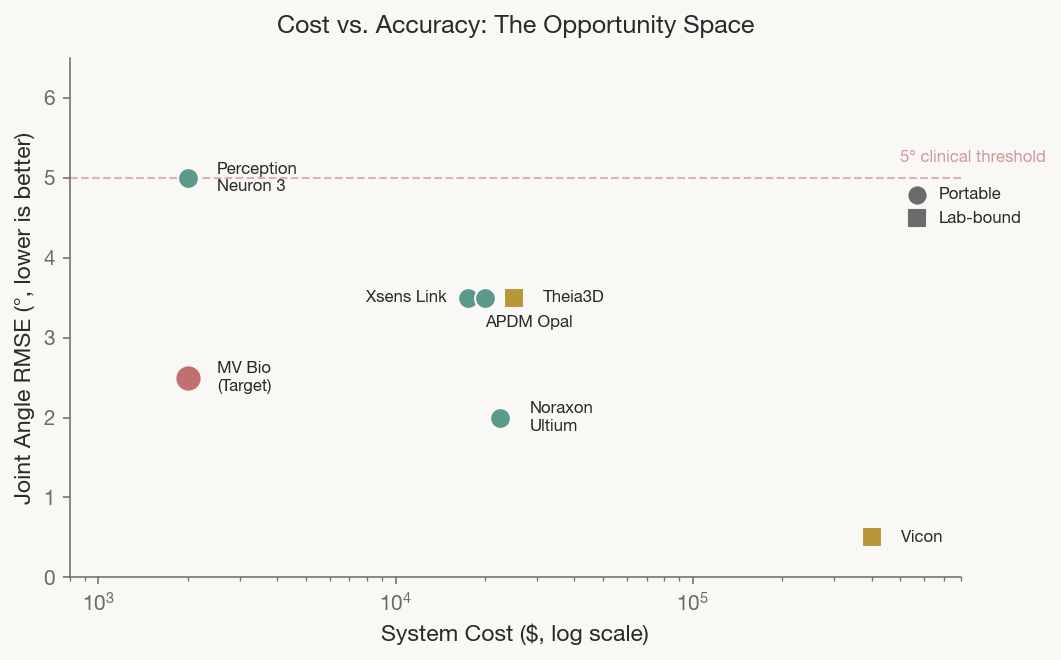

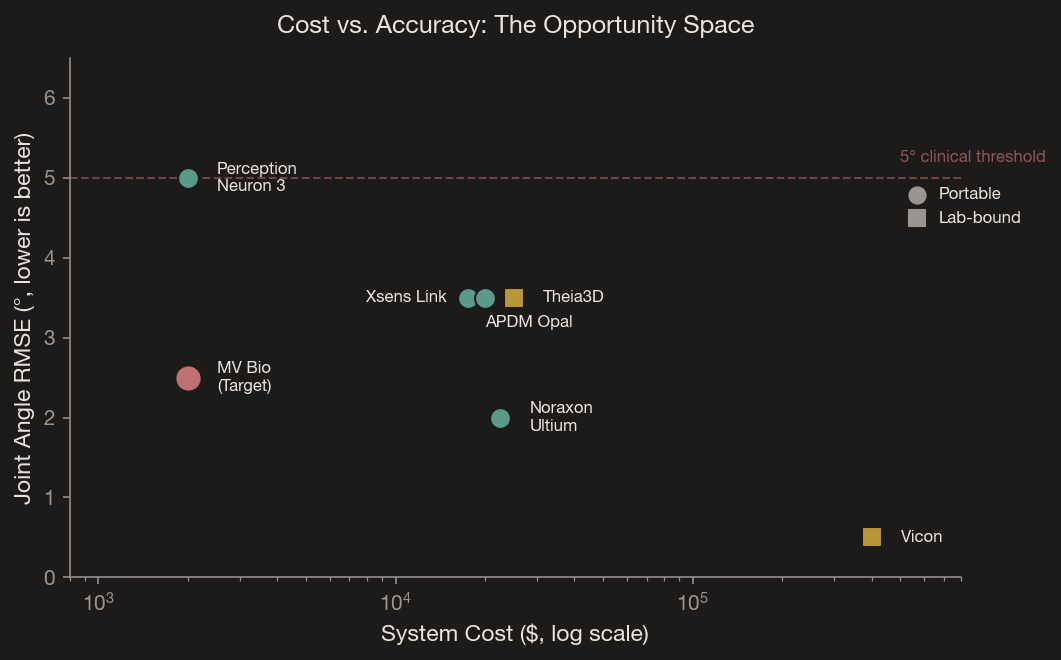

Meanwhile, the wearable systems that are portable force a quiet tradeoff, giving up precision for convenience in ways that compound invisibly across the kinematic chain. We’re building a system that refuses that tradeoff: clinical-grade accuracy in something you’d actually want to wear.

The Challenge is Structural

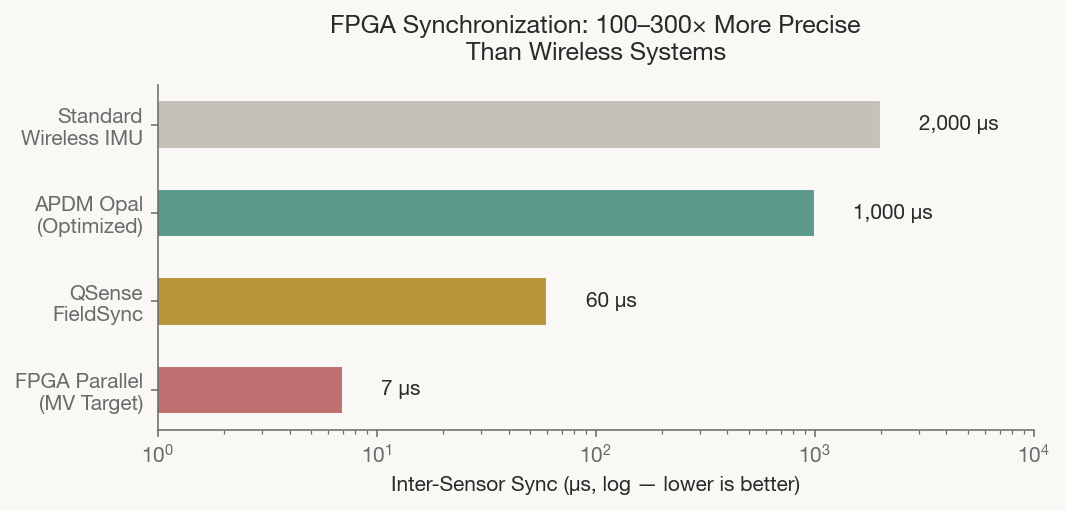

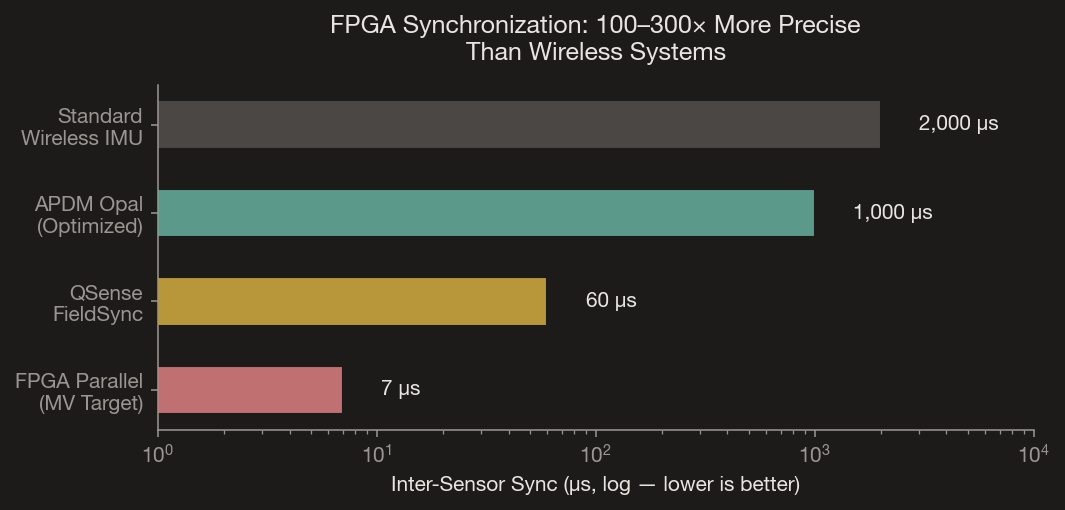

When a wearable suit samples 15+ sensors wirelessly, each reading arrives at a slightly different moment, typically 1–2 milliseconds apart. At the angular velocities of normal walking (~200°/s), a 1 ms timing mismatch produces 0.2° of error per sensor pair (200°/s × 0.001 s). Propagated through a 7-joint lower-body model, and assuming the errors add linearly in the worst case, they stack to roughly 1.4° (7 × 0.2°) of cumulative kinematic error before sensor noise enters the picture. The clinical accuracy threshold for gait analysis is 5° RMSE. Synchronization jitter alone can consume more than a quarter of that budget.
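The arithmetic behind those numbers fits in a few lines. This is a minimal sketch, assuming worst-case linear stacking across joints; the function name and parameters are ours for illustration and are not part of any firmware or library.

```python
def sync_error_deg(angular_velocity_dps: float, skew_s: float, joints: int = 1) -> float:
    """Worst-case angle error caused by timing skew between sensors.

    Assumes each joint contributes (angular velocity x skew) and that
    the per-joint errors add linearly along the kinematic chain.
    """
    return angular_velocity_dps * skew_s * joints


CLINICAL_THRESHOLD_DEG = 5.0  # gait-analysis RMSE threshold quoted in the text

per_pair = sync_error_deg(200.0, 1e-3)         # one sensor pair, 1 ms skew
chain = sync_error_deg(200.0, 1e-3, joints=7)  # 7-joint lower-body model
budget_share = chain / CLINICAL_THRESHOLD_DEG  # fraction of the error budget

print(f"{per_pair:.3f} deg per pair, {chain:.2f} deg over the chain, "
      f"{budget_share:.0%} of the budget")
```

Real errors will rarely line up in the same direction at every joint, so treat the chain figure as an upper bound under this simple model and not as a prediction.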

The Insight: Make Synchronization a Hardware Guarantee

We replaced software-interpolated timing with deterministic hardware synchronization. An FPGA drives all sensors on a shared parallel bus — every IMU is sampled within microseconds of every other. This isn’t a calibration trick. It’s an architectural decision that removes an entire category of error at the physics layer.

The system reads 18+ ultra-low-power IMUs simultaneously across dedicated SPI data lines, with the FPGA broadcasting a common clock and chip-select. Each BMI270 embeds a 24-bit sensortime counter in every data frame, enabling sub-sample timing reconstruction independent of USB polling jitter. An STM32 MCU handles DMA transfer, embedded DSP, and real-time sensor fusion.
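To make the sensortime idea concrete, here is a hedged host-side sketch of how a wrapping 24-bit counter could be turned into a monotonic timeline. It is not our firmware. The helper names are hypothetical, the little-endian byte order is an assumption, and the tick period of 39.0625 µs is an assumption that should be checked against the BMI270 datasheet.

```python
SENSORTIME_BITS = 24            # counter width stated in the text
SENSORTIME_TICK_S = 39.0625e-6  # ASSUMED tick period; verify in the datasheet


def parse_sensortime(frame_bytes: bytes) -> int:
    """Decode three sensortime bytes (assumed little-endian) into ticks."""
    if len(frame_bytes) != 3:
        raise ValueError("expected exactly 3 sensortime bytes")
    return int.from_bytes(frame_bytes, "little")


def unwrap_sensortime(raw_ticks: list[int]) -> list[float]:
    """Convert wrapping counter readings to seconds since the first sample.

    Assumes consecutive readings are less than one full counter wrap apart.
    """
    if not raw_ticks:
        return []
    modulus = 1 << SENSORTIME_BITS
    elapsed = 0
    prev = raw_ticks[0]
    seconds = [0.0]
    for raw in raw_ticks[1:]:
        # Modular difference stays correct when the counter rolls over.
        elapsed += (raw - prev) % modulus
        prev = raw
        seconds.append(elapsed * SENSORTIME_TICK_S)
    return seconds


# Two readings that straddle a rollover: 0xFFFFF0 then 0x000010 (32 ticks apart).
ticks = [parse_sensortime(b"\xf0\xff\xff"), parse_sensortime(b"\x10\x00\x00")]
timeline = unwrap_sensortime(ticks)
```

Because the timestamps come from the sensor's own counter, the reconstructed timeline does not depend on when the host happened to poll for the data.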

For the technically curious: at <10 µs synchronization, cumulative chain error drops to <0.014° (200°/s × 10 µs × 7 joints), more than two orders of magnitude below the clinical threshold. Calibration and sensor noise become the sole accuracy limiters (and we’re working on some pretty cool solutions for those, too).

Designed to Disappear

Precision means nothing if the system stays in a drawer. Our wearable system integrates into a variety of athletic performance and compression clothing: lightweight, form-fitting, durable, and so unobtrusive you don’t even notice it. The UX is deliberately minimal: insights about your biomechanics surface when they matter, without bombarding you with dashboards or raw data streams. Clinicians can always look under the hood to get the depth they need. Individuals get clarity without complexity. Researchers get full data access without setup friction. The goal is a system that feels less like medical instrumentation and more like something you just put on.

The Real Opportunity

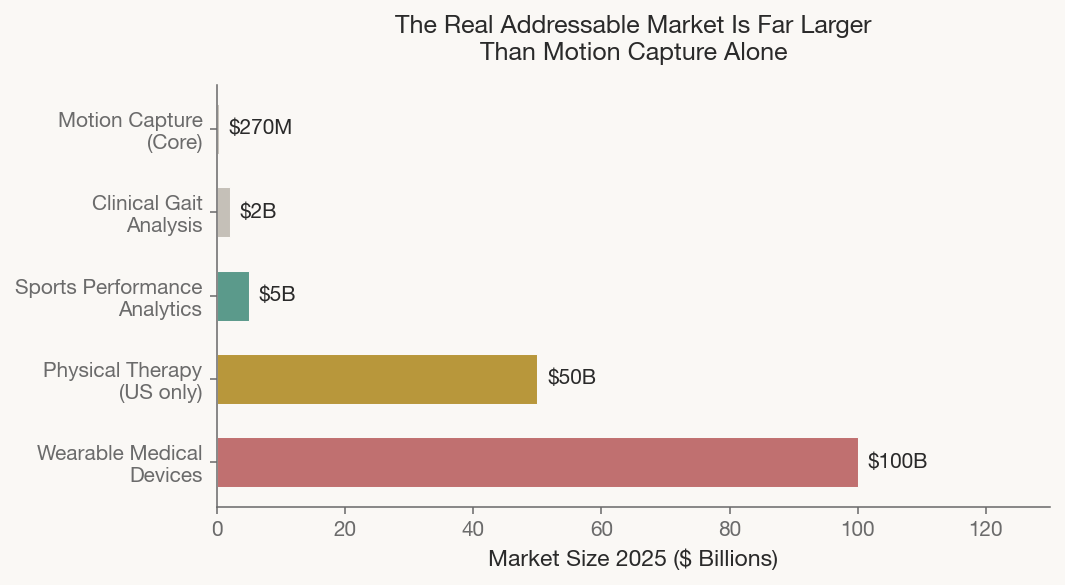

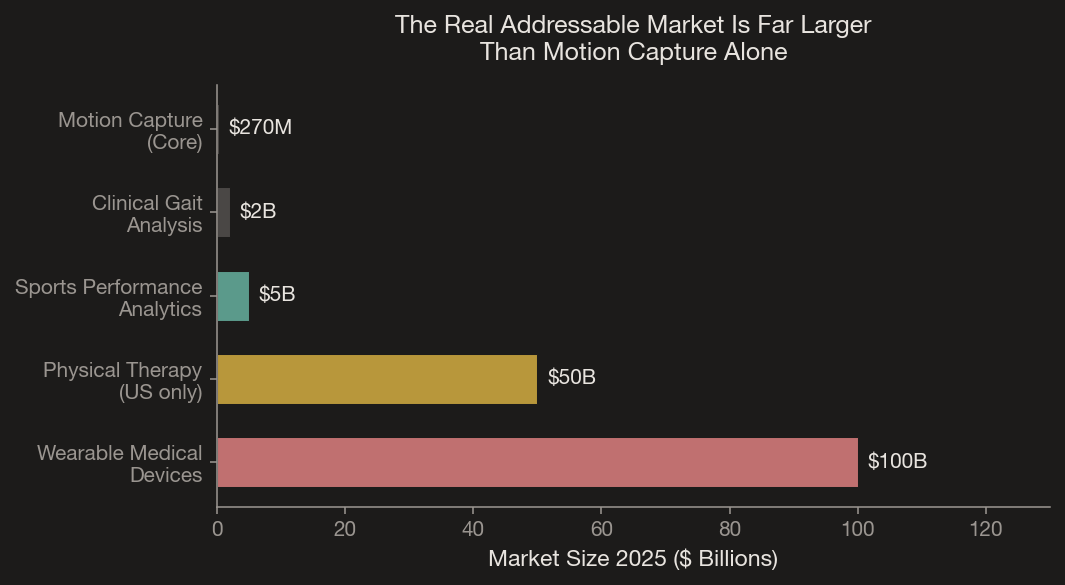

The motion capture market (~$270M) is a fraction of the addressable space. Clinical gait analysis, sports analytics, physical therapy, and occupational ergonomics represent a combined market exceeding $50 billion. And this is before we account for the robotics market. In November 2025, mo-cap companies like Xsens started pivoting explicitly to humanoid robotics data, signaling that wearable motion capture is becoming infrastructure far beyond its traditional markets. More detail on this topic in Part 3 of this series.

Our FPGA-synchronized architecture, embedded intelligence, and multi-market positioning offer differentiation current systems lack, all at the intersection of clinical-grade accuracy, genuine wearability, and sub-$2,000 cost.

What’s Next

Phase I target: <2.5° RMSE across lower-body joints during dynamic movements, with 10+ sensors sampled in parallel at >300 Hz and sub-10 µs inter-sensor synchronization, validated against optical ground truth. But capturing motion with precision is only half the story. What matters is what you do with that data. In Part 2, we explain how reinforcement learning transforms raw kinematics into a personalized movement coach.
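For readers who want to see what the Phase I accuracy target means in practice, here is a minimal sketch of the RMSE check for a single joint. It assumes the wearable and optical angle series are already time-aligned and resampled to the same length; the function name and the sample values are illustrative only, not measured results.

```python
import math


def rmse_deg(wearable_deg: list[float], optical_deg: list[float]) -> float:
    """Root-mean-square error between two time-aligned joint-angle series."""
    if not wearable_deg or len(wearable_deg) != len(optical_deg):
        raise ValueError("series must be non-empty and equal in length")
    squared = [(w - o) ** 2 for w, o in zip(wearable_deg, optical_deg)]
    return math.sqrt(sum(squared) / len(squared))


PHASE_I_TARGET_DEG = 2.5  # target quoted in the text

# Illustrative knee-flexion angles in degrees (made-up numbers).
wearable = [10.0, 20.0, 30.0]
optical = [11.0, 18.0, 30.0]

error = rmse_deg(wearable, optical)
meets_target = error < PHASE_I_TARGET_DEG
```

A full validation would repeat this per joint and per movement, and how the two systems are aligned in time matters as much as the formula itself.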

- Grand View Research, “3D Motion Capture Market Size, Share, Growth Report, 2030.”

- McGinley et al. (2009), “The reliability of three-dimensional kinematic gait measurements.” Gait & Posture, 29(3), 360–369.

- Nikolic et al. (ICRA 2014), “A Synchronized Visual-Inertial Sensor System with FPGA Pre-Processing.”

- Bosch Sensortec, “BMI270 Datasheet, BST-BMI270-DS000-07,” March 2023.

- Scientific Reports (2025), “Validated low-cost standardized VICON configuration.”

- Grand View Research, “U.S. Physical Therapy Services Market Size Report, 2033.”