The Adaptive Movement Coach: AI That Learns What Optimal Looks Like — For You

Your digital twin, your personal gold standard, and an AI assistant that bridges the gap


A physical therapist watches you walk for thirty seconds and makes a judgment call. That judgment — based on training, experience, and a quick visual impression — is how most musculoskeletal healthcare gets delivered. It’s subjective, non-repeatable, and blind to the subtle asymmetries that precede injury by months.

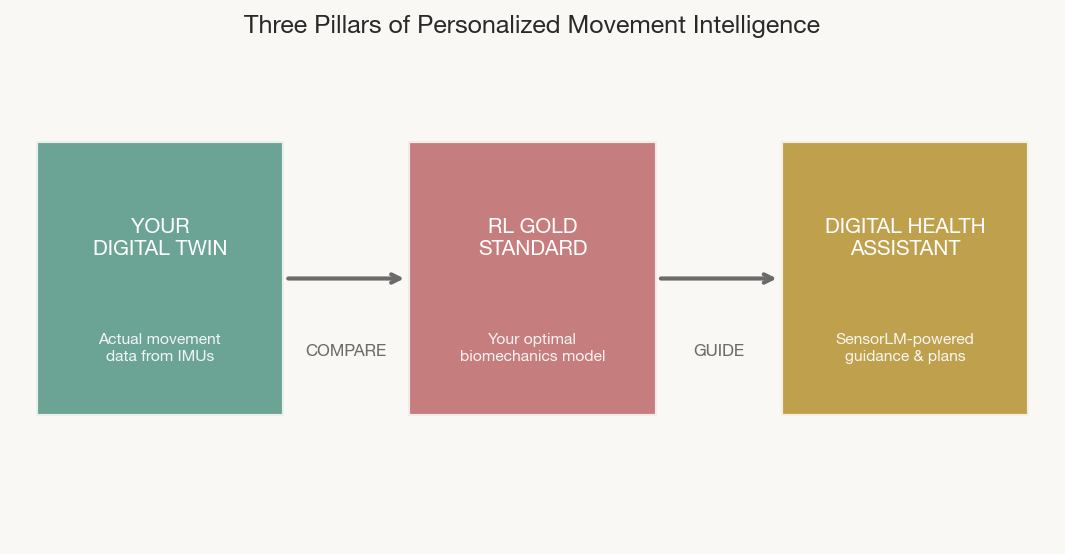

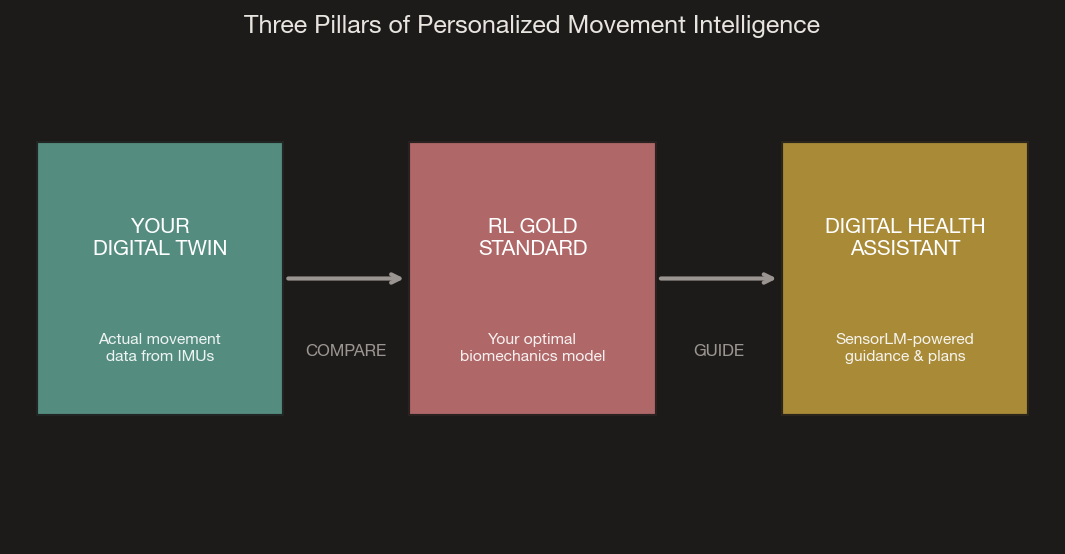

We’re building something different: a system with three components that work together to become your personal biomechanical intelligence.

Three Parts, One System

Your Digital Twin is your actual movement data — the real-time kinematic profile captured by our IMU array as you squat, walk, lunge, and rotate. This is a faithful digital mirror of how you actually move, updated continuously.

Your RL Gold Standard is a reinforcement learning model that simulates your optimal biomechanics — not a population average, but the best achievable movement patterns for your specific body. It’s computed from cutting-edge musculoskeletal research, parameterized to your height, weight, limb segment lengths, and age, across the seven fundamental movement patterns: squat, hinge, lunge, gait, push, pull, and rotation.

Your Digital Health Assistant compares your twin against your gold standard, finds the gaps, and — powered by sensor-language AI — explains what’s happening and what to do about it in plain language.
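As a rough illustration of the comparison step, the sketch below scores the gap between a measured joint-angle trace (the twin) and a reference trace (the gold standard), then flags joints that deviate too far. The joint names, sample angles, and 5° threshold are hypothetical placeholders, not values from our system.

```python
from math import sqrt

def rms_gap(measured, reference):
    """Root-mean-square difference (degrees) between two equal-length angle traces."""
    if len(measured) != len(reference):
        raise ValueError("traces must be time-aligned and equal length")
    return sqrt(sum((m - r) ** 2 for m, r in zip(measured, reference)) / len(measured))

def find_gaps(twin, gold, threshold_deg=5.0):
    """Return {joint: rms gap} for joints whose gap exceeds the threshold."""
    gaps = {joint: rms_gap(twin[joint], gold[joint]) for joint in gold if joint in twin}
    return {joint: gap for joint, gap in gaps.items() if gap > threshold_deg}

# Hypothetical squat data: knee flexion angles in degrees at four time steps.
twin = {"left_knee": [10.0, 40.0, 70.0, 40.0], "right_knee": [10.0, 50.0, 89.0, 50.0]}
gold = {"left_knee": [10.0, 50.0, 90.0, 50.0], "right_knee": [10.0, 50.0, 90.0, 50.0]}

flagged = find_gaps(twin, gold)
print(flagged)  # only the left knee exceeds the threshold in this example
```

A single RMS number per joint is the simplest possible gap metric. A real comparison would likely also need time alignment between traces and per-phase scoring.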

How the Gold Standard Works

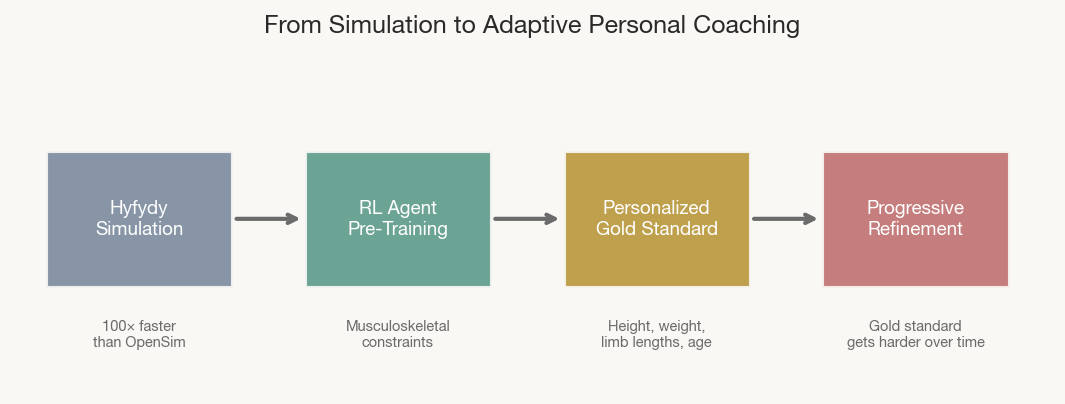

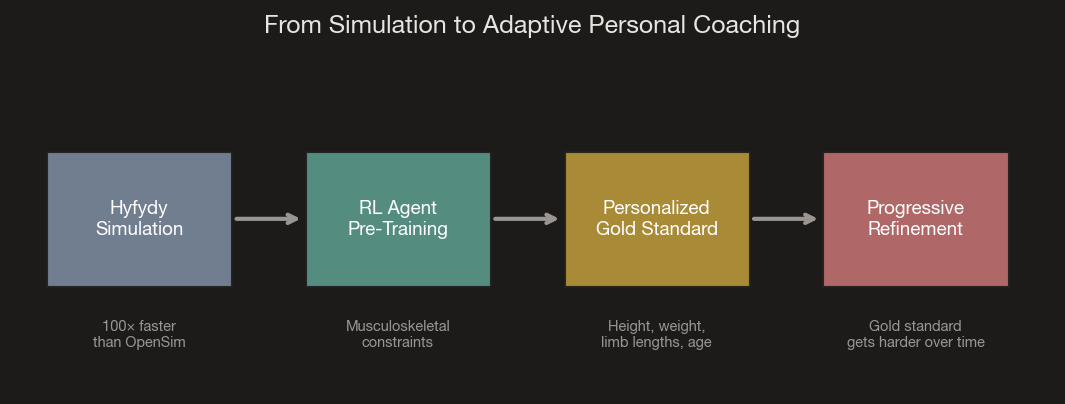

The RL agent is pre-trained in Hyfydy, a biomechanical simulation engine that achieves OpenSim-grade musculoskeletal accuracy at 100× the speed of OpenSim. The agent learns movement patterns that are physiologically valid (penalized for excessive joint loading, rewarded for efficiency and stability) and is then personalized to your anthropometrics.
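To make the reward shaping concrete, here is a minimal sketch of a per-step reward that combines a joint-loading penalty with efficiency and stability terms. The weights, the load limit, and the input names are illustrative assumptions. This is not Hyfydy's API, and it is not the objective used in DEP-RL.

```python
def step_reward(joint_loads, metabolic_cost, com_offset,
                load_limit=3.0, w_load=1.0, w_effort=0.1, w_stability=0.5):
    """Toy per-step reward (all inputs and weights are hypothetical).

    joint_loads    -- joint contact forces, in multiples of body weight
    metabolic_cost -- effort proxy for this step; lower means more efficient
    com_offset     -- distance (m) of the center of mass from the support center
    """
    # Penalize only the portion of each joint load above the limit.
    overload = sum(max(0.0, load - load_limit) for load in joint_loads)
    # Efficiency and stability enter as costs, so a lower cost yields a higher reward.
    return -(w_load * overload + w_effort * metabolic_cost + w_stability * com_offset)

safe = step_reward([2.0, 2.5], metabolic_cost=1.0, com_offset=0.02)
risky = step_reward([2.0, 4.5], metabolic_cost=1.0, com_offset=0.02)
print(safe > risky)  # the overloaded step scores lower
```

Penalizing only the load above a limit, rather than all load, lets the agent move freely inside the safe range while still steering it away from excessive joint stress.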

Crucially, the gold standard is not derived from your captured motion data. It represents where you’re headed, not where you are. Your measured progress only determines when the targets move: as you improve and approach your current targets, the model progressively advances (slightly greater ranges of motion, more efficient coordination), like a coach who raises the bar as you clear it. Think progressive overload, applied to movement quality.
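The "raise the bar as you clear it" idea can be sketched as a simple update rule: once the achieved value comes within a tolerance of the current target, the target moves up by a small step, capped at a ceiling that stands in for the personalized optimum. The function name, tolerance, step size, and angles below are all hypothetical.

```python
def advance_target(target_deg, achieved_deg, ceiling_deg,
                   tolerance_deg=2.0, step_deg=3.0):
    """Raise the target by a small step once the user is within tolerance of it.

    The target never exceeds ceiling_deg, a stand-in for the personalized optimum.
    """
    if achieved_deg >= target_deg - tolerance_deg:
        return min(target_deg + step_deg, ceiling_deg)
    return target_deg

# Hypothetical sessions: peak knee flexion (degrees) achieved in successive squats.
target = 90.0
for achieved in [80.0, 89.0, 92.0, 97.0]:
    target = advance_target(target, achieved, ceiling_deg=95.0)
print(target)
```

In this sketch the ceiling is fixed independently of the measurements. The captured data only decides when the intermediate target steps toward it.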

For the technically curious: DEP-RL (Schumacher et al., ICLR 2023) solved the exploration problem in musculoskeletal systems with 90+ muscles, enabling natural bipedal walking from energy-minimization objectives alone, validated in both Hyfydy and MuJoCo.

Making It Intuitive

Raw kinematic data is meaningless to most people and many clinicians. Google’s SensorLM (NeurIPS 2025) demonstrated that foundation models trained on 60 million hours of wearable data can translate sensor streams into natural language. We’re integrating similar sensor-to-language capabilities so the assistant doesn’t say “knee valgus exceeded 8°” — it says “your left knee is collapsing inward during landing. Try engaging your glutes more during push-off.”
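For intuition only, the snippet below shows the input and output shape of that translation using a hard-coded template. A sensor-language model would learn this mapping from data rather than rely on a rule like this one. The function name and arguments are hypothetical, and the 8° limit simply reuses the figure from the example above.

```python
def describe_knee_valgus(side, valgus_deg, phase, limit_deg=8.0):
    """Turn a knee valgus reading into a plain-language cue, or None if within the limit."""
    if valgus_deg <= limit_deg:
        return None
    return (f"Your {side} knee is collapsing inward during {phase}. "
            "Try engaging your glutes more during push-off.")

message = describe_knee_valgus("left", 11.5, "landing")
print(message)
```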

The assistant can generate personalized movement plans, track progress over time, and answer questions about your biomechanical health conversationally. All of it is packaged in a UX designed to be effortlessly simple: the complexity lives under the surface, and the experience stays clean.

The Clinical Evidence

The evidence supports this approach across domains. Real-time gait retraining with biofeedback reduced running injuries by 62% in a randomized controlled trial of 320 runners. Wearable accelerometry now detects prodromal Parkinson’s up to seven years before clinical diagnosis. IMU-based Parkinson’s monitoring has achieved FDA clearance. But none of these systems learn the individual; they apply population models to individual bodies.

The missing piece isn’t sensing or even AI. It’s the combination: a personalized optimal model you’re working toward, a digital record of where you are, and an intelligent layer that bridges the two, all in something you’d want to wear every day.

What’s Next

Phase I delivers the integrated lower-body system with a validated RL agent, real-time monitoring and gold standard comparison at under 500 ms latency, and natural-language interaction, all benchmarked against optical motion capture. This same data pipeline feeds directly into our companion effort: building the data infrastructure for the next generation of embodied AI. Read more in Part 3.

- Schalkamp et al. (2023), “Wearable movement-tracking data identify Parkinson’s disease years before clinical diagnosis.” Nature Medicine.

- Schumacher et al. (2023), “DEP-RL: Embodied Exploration for RL in Overactuated and Musculoskeletal Systems.” ICLR 2023.

- Luo et al. (2024), “Experiment-free exoskeleton assistance via learning in simulation.” Nature.

- Zhang et al. (2025), “SensorLM: Learning the Language of Wearable Sensors.” NeurIPS 2025.

- Chan et al. (2018), “Effect of gait retraining on running injuries.” Am J Sports Med.

- Hyfydy — High Fidelity Dynamics. https://hyfydy.com