From Human Movement to Machine Intelligence: Building the Data Layer for Physical AI

The robotics industry has a $5B data problem. Our solutions combine IMU motion capture with fingertip force sensing to capture both the movement and the touch of skilled manipulation — the missing data dimension for dexterous robotics.


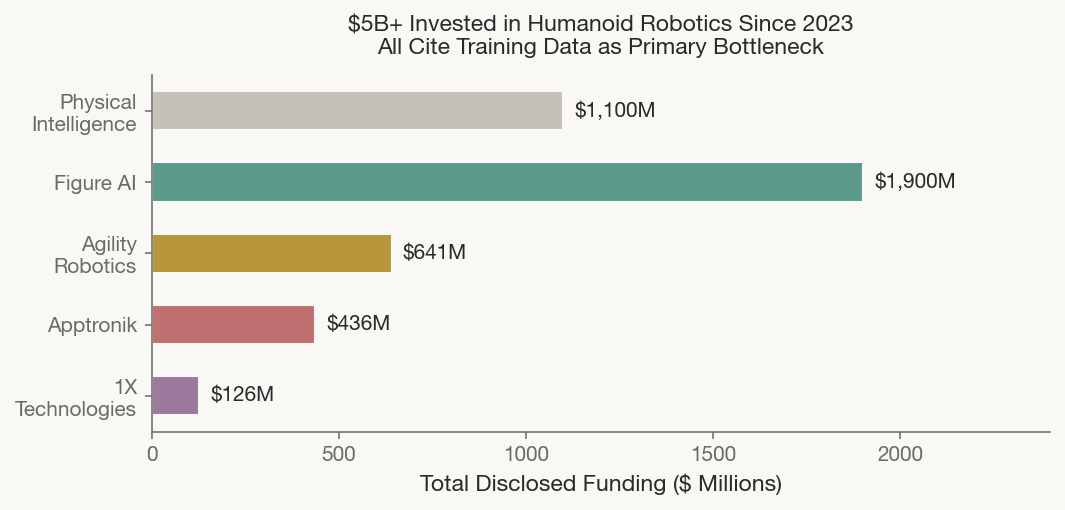

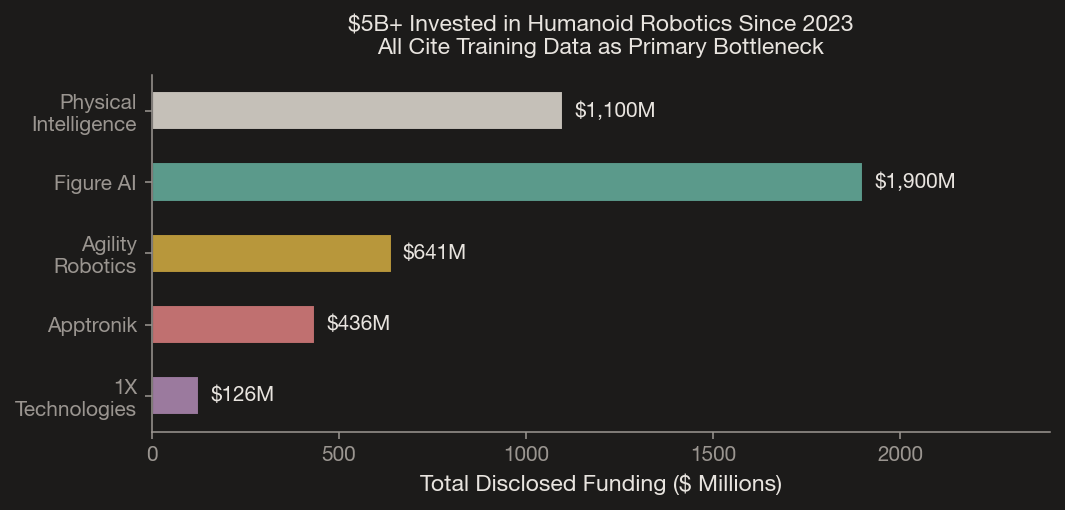

Physical Intelligence raised $1.1 billion to teach robots to fold laundry. Figure AI raised $1.9 billion to put humanoids in factories. Tesla is beginning to manufacture Optimus at Fremont. Collectively, the humanoid robotics industry has attracted over $5 billion since 2023.

Every one of these companies says the same thing about their biggest bottleneck: data.

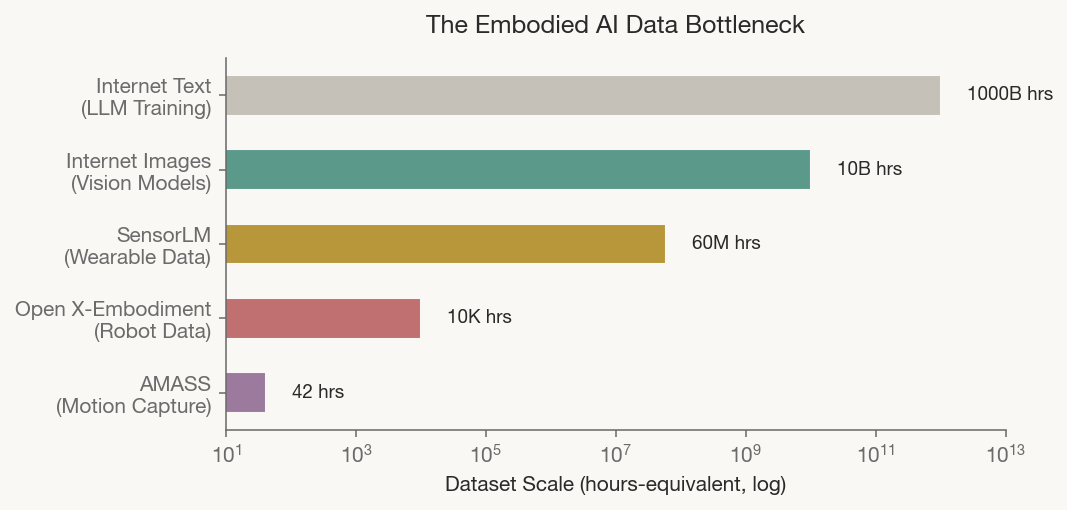

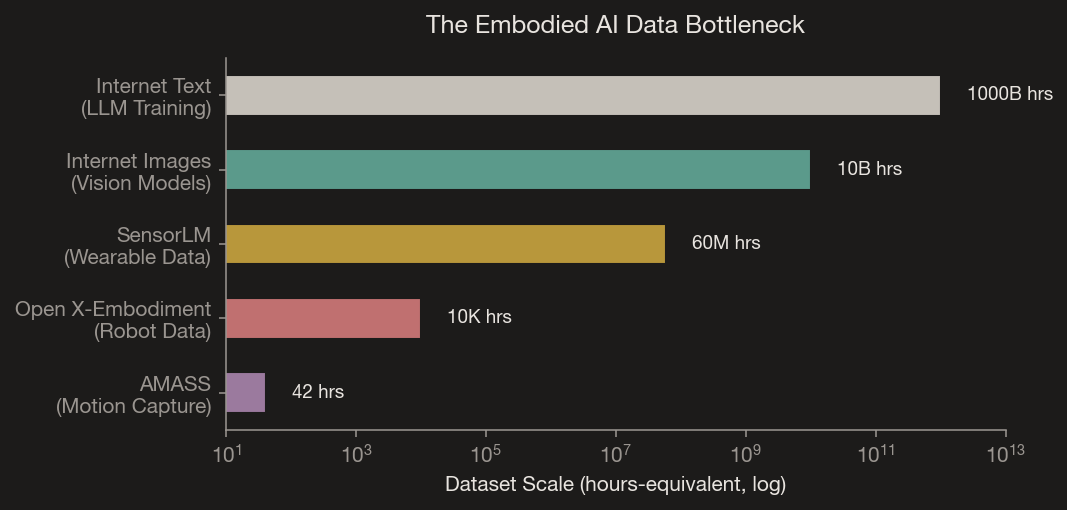

The Scale Problem

Large language models train on trillions of tokens scraped from the internet. The largest open robot manipulation dataset, Open X-Embodiment, contains roughly 10,000 hours. The largest unified human motion capture dataset, AMASS, contains 42 hours. That's not a gap; it means building from near zero.

Unlike text, robotic manipulation data cannot be scraped from the web. It must be collected one interaction at a time, in the physical world, with calibrated sensors. Physical Intelligence’s $600M Series B was explicitly for “collecting more data.” The infrastructure race is on.

Why Touch Is the Missing Dimension

Most robotic training data captures what a hand does: its position, trajectory, and joint angles. What it almost never captures is what a hand feels. This matters enormously. Humans don't pick up an egg the same way they pick up a wrench, and the difference isn't just in the trajectory but in the force, too.

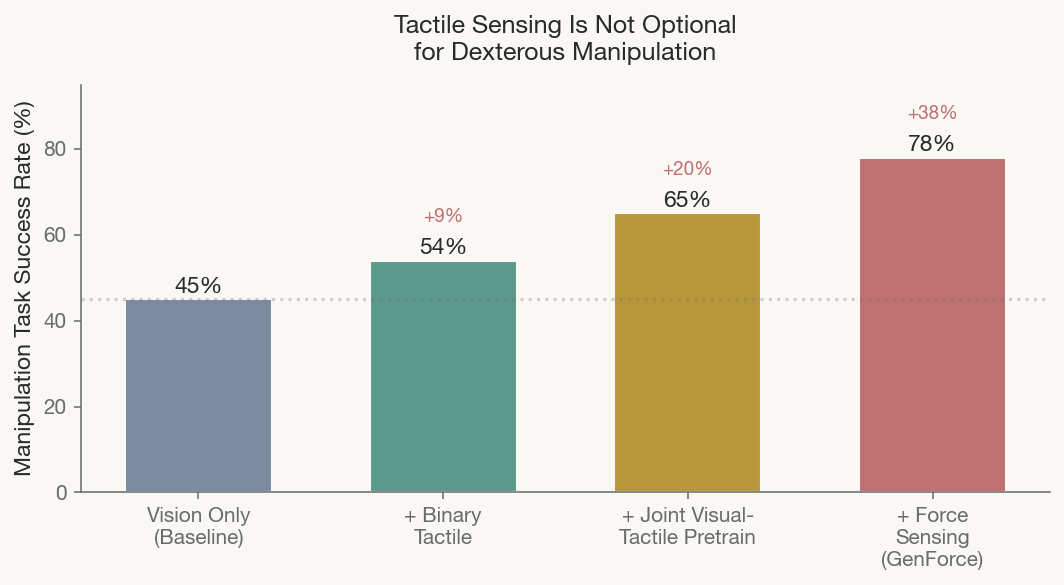

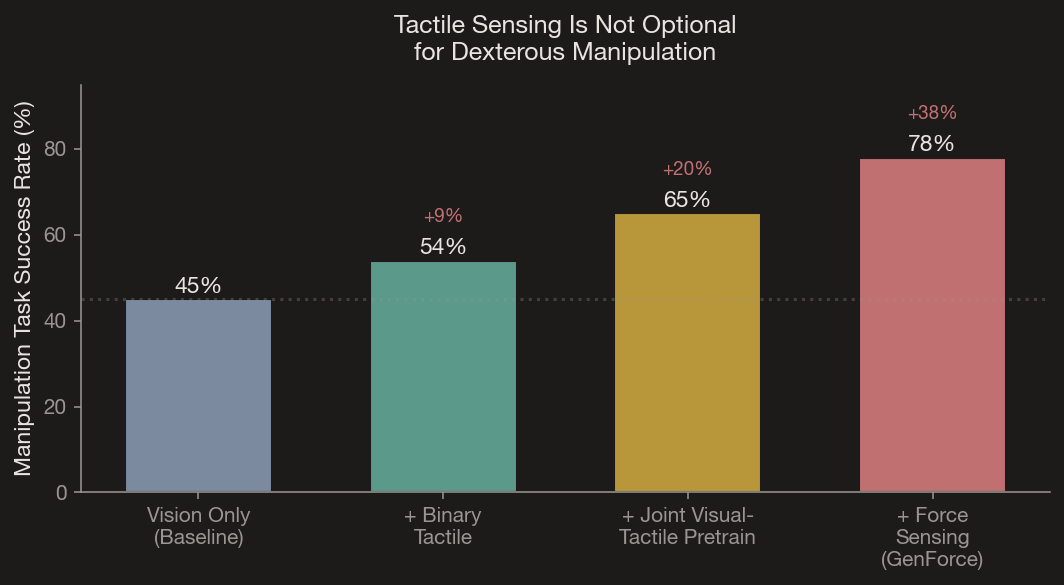

Recent research quantifies just how critical tactile data is for manipulation. VTDexManip (ICLR 2025) found that adding even binary touch signals to vision-only policies improved dexterous task success by approximately 20%, with joint visual-tactile pretraining roughly doubling that total gain. Hu et al. (2025) went further, finding that tactile feedback mattered more than visual feedback for in-hand manipulation of slender objects. The implication is clear: for precision tasks, motion data alone isn’t enough. Force and contact are essential dimensions.
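To make "binary touch signal" concrete, here is a minimal sketch of one way such a signal could be attached to a vision-only policy input. It assumes five fingertip force readings in newtons and a hypothetical 0.2 N contact threshold; the function names are illustrative, and this is not VTDexManip's actual pipeline.

```python
from typing import List, Sequence

# Hypothetical contact threshold in newtons; a real value depends on the sensor.
CONTACT_THRESHOLD_N = 0.2


def binarize_touch(forces_n: Sequence[float], threshold: float = CONTACT_THRESHOLD_N) -> List[int]:
    """Map each fingertip force reading to 1 (contact) or 0 (no contact)."""
    return [1 if f >= threshold else 0 for f in forces_n]


def build_observation(visual_features: Sequence[float], forces_n: Sequence[float]) -> List[float]:
    """Append binary contact flags to a vision-only feature vector."""
    return list(visual_features) + [float(b) for b in binarize_touch(forces_n)]
```

For example, `build_observation([0.5, -1.2, 3.0], [0.0, 0.35, 1.1, 0.05, 0.2])` returns `[0.5, -1.2, 3.0, 0.0, 1.0, 1.0, 0.0, 1.0]`. Even this one bit per finger tells the policy which fingers are touching the object, something the camera alone may not resolve under occlusion.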

For the technically curious, GenForce (Nature Communications, 2026) demonstrated transferable force sensing across heterogeneous tactile sensors using unified marker representations, analogous to how the somatosensory cortex encodes touch across different skin regions. This means force models trained on one sensor type can transfer to others without exhaustive recollection.

The G-1 Glove

Our G-1 glove targets the sharpest data gap in embodied AI: dexterous manipulation with force context. It combines synchronized IMUs across all phalanges with piezoresistive force sensors at every fingertip, capturing both the movement pattern and the contact dynamics of skilled manipulation.
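As an illustration of what "movement plus touch" means at the data level, the sketch below pairs per-phalanx IMU readings with fingertip forces under one shared timestamp. The field names, units, and the accelerometer-plus-gyroscope layout are assumptions for illustration, not the G-1's actual data format.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass(frozen=True)
class ImuSample:
    accel: Vec3  # linear acceleration, m/s^2 (assumed units)
    gyro: Vec3   # angular velocity, rad/s (assumed units)


@dataclass(frozen=True)
class GloveFrame:
    """Hypothetical synchronized snapshot: every reading shares one timestamp."""
    t_us: int                            # acquisition time, microseconds
    phalanx_imus: Tuple[ImuSample, ...]  # one IMU per instrumented phalanx (movement)
    fingertip_forces: Tuple[float, ...]  # one reading per fingertip, newtons (touch)

    def __post_init__(self) -> None:
        if len(self.fingertip_forces) != 5:
            raise ValueError("expected one force reading per fingertip (5)")

    def to_vector(self) -> List[float]:
        """Flatten movement and touch into a single feature vector for training."""
        vec: List[float] = []
        for imu in self.phalanx_imus:
            vec.extend(imu.accel)
            vec.extend(imu.gyro)
        vec.extend(self.fingertip_forces)
        return vec
```

Keeping both modalities in one frame, rather than in two separately timestamped streams, means downstream code never has to re-align motion and contact after the fact.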

Existing gloves optimize for either haptic feedback (HaptX, $5,500/pair, tethered to a pneumatic pack) or motion capture (Manus Quantum, $2,100–$5,000). None combine high-DOF finger tracking, fingertip force sensing, and a wearable form factor designed for hours of real-world data collection at an accessible cost. The G-1's sensors are also acquired in parallel by an FPGA on the same data acquisition platform as our lower-body system, and the recordings are annotated through automated sensor-to-language pipelines as an additional encoding.
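To give a flavor of what a sensor-to-language annotation can look like, here is a deliberately simple, rule-based sketch that turns fingertip force traces into one sentence per contact episode. The threshold, finger ordering, and sentence template are hypothetical; this is not our production pipeline, which the text above describes only at a high level.

```python
from typing import List, Optional, Sequence

FINGERS = ("thumb", "index", "middle", "ring", "little")


def describe_contacts(
    times_s: Sequence[float],
    forces_n: Sequence[Sequence[float]],
    threshold_n: float = 0.2,  # hypothetical contact threshold, newtons
) -> List[str]:
    """Emit one sentence per contact episode, grouped by finger.

    forces_n[i][k] is the force on finger k at times_s[i]. An episode starts at
    the first sample at or above the threshold and ends at the first sample
    below it.
    """
    sentences: List[str] = []
    for k, name in enumerate(FINGERS):
        onset: Optional[float] = None
        peak = 0.0
        for t, row in zip(times_s, forces_n):
            f = row[k]
            if f >= threshold_n:
                if onset is None:
                    onset, peak = t, f  # contact begins
                else:
                    peak = max(peak, f)  # contact continues
            elif onset is not None:
                sentences.append(
                    f"{name} fingertip contact from {onset:.2f} s to {t:.2f} s, peak {peak:.1f} N"
                )
                onset = None
        if onset is not None:  # contact still active when the recording ends
            sentences.append(
                f"{name} fingertip contact from {onset:.2f} s to end of recording, peak {peak:.1f} N"
            )
    return sentences
```

A learned captioner would replace the fixed template, but the principle is the same: pair each raw trace with text that a language-conditioned model can consume.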

The same platform that provides clinical-grade, full-body human movement data can also generate the movement and manipulation training data that robotics companies need.

Software for Humans and Agents

Jensen Huang described physical AI as addressing a “$50 trillion industry largely void of technology.” We've also internalized a related insight: software must serve both human developers and AI agents. Our SDK is designed for dual consumption, pairing human-readable interfaces with MCP- and CLI-compatible tool definitions that let AI agents discover and build with our sensing solutions programmatically. Agents can use the system to collect data, train on it, and deploy improved models, all through the same API surface.
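One way to get "dual consumption" from a single source of truth is to write each tool once as an MCP-style definition (a `name`, a `description`, and a JSON Schema `inputSchema`) and derive the command-line interface from it. The sketch below assumes a hypothetical `start_recording` tool with hypothetical parameters; it is not our SDK's actual tool set.

```python
import argparse

# Hypothetical tool definition in the MCP shape: name, description, inputSchema.
START_RECORDING_TOOL = {
    "name": "start_recording",
    "description": "Start a synchronized IMU + fingertip-force recording session.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "duration_s": {"type": "number", "description": "Recording length in seconds"},
            "task_label": {"type": "string", "description": "Label for the manipulation task"},
        },
        "required": ["duration_s"],
    },
}

# Only the JSON Schema types this sketch handles.
_JSON_TO_PY = {"number": float, "integer": int, "string": str}


def build_cli(tool: dict) -> argparse.ArgumentParser:
    """Derive a command-line parser from the same schema an agent would read."""
    parser = argparse.ArgumentParser(prog=tool["name"], description=tool["description"])
    schema = tool["inputSchema"]
    required = set(schema.get("required", []))
    for name, spec in schema["properties"].items():
        parser.add_argument(
            "--" + name.replace("_", "-"),
            dest=name,
            type=_JSON_TO_PY[spec["type"]],
            required=name in required,
            help=spec.get("description"),
        )
    return parser
```

An agent reads `START_RECORDING_TOOL` directly, while a developer runs the equivalent `start_recording --duration-s 30 --task-label "fold towel"`. Because both views come from one definition, they cannot drift apart.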

The Flywheel

More users generate more diverse movement and manipulation data. Better data trains better models. Better models produce better feedback. Better feedback attracts more users. The hardware platform is the engine that spins this flywheel, and the companies spending billions on robot foundation models can benefit from exactly what it captures.

References

- Physical Intelligence, blog posts on π0, π0.5, π*0.6, RL Token, and MEM. https://www.pi.website/blog

- Open X-Embodiment (2024), “Robotic Learning Datasets and RT-X Models.” ICRA 2024.

- Max Planck Institute, AMASS dataset. https://amass.is.tue.mpg.de

- VTDexManip (2025), “A Dataset and Benchmark for Visual-Tactile Pretraining and Dexterous Manipulation.” ICLR 2025.

- Chen et al. (2026), “Training Tactile Sensors to Learn Force Sensing from Each Other.” Nature Communications.

- Hu et al. (2025), “Dexterous in-hand manipulation of slender objects through deep RL with tactile sensing.” Robotics & Autonomous Systems.

- Lepora (2024), “The Future Lies in a Pair of Tactile Hands.” Science Robotics.

- MANUS, “RoboBrain-Dex: Egocentric Data Collection for VLA Models.”